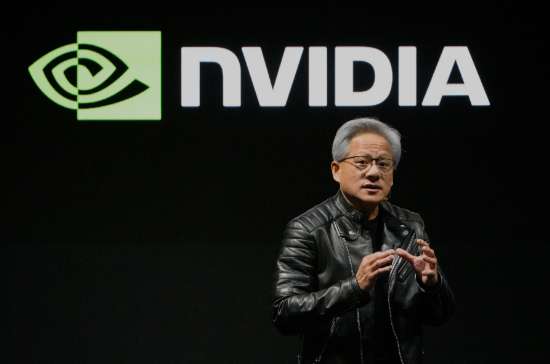

This 'Hidden Business' of NVIDIA Will Be the Market's Focal Point in 2 Weeks

When NVIDIA (NVDA) releases its second-quarter earnings on August 27, investors will be closely focused on the performance of its data center business. After all, the chip giant has achieved revenue growth in this segment by selling its high-performance AI processors.

But the data center division's business extends far beyond chip sales. It also includes some of NVIDIA's often-overlooked yet critical products: its networking technologies.

NVIDIA's networking portfolio includes NVLink, InfiniBand, and Ethernet solutions. These technologies let its chips communicate with each other, connect servers within large data centers, and ultimately ensure end users can reach everything they need to run AI applications.

Gilad Shainer, NVIDIA's Senior Vice President of Networking, explained: “The most important part in building a supercomputer is the infrastructure. The most important part is how you connect those computing engines together to form that larger unit of computing.”

This has translated into substantial sales. In the last fiscal year, NVIDIA's total data center revenue reached $115.1 billion, with networking sales accounting for $12.9 billion. While that figure might seem modest compared to the $102.1 billion in chip sales, it still surpassed the annual revenue of NVIDIA's second-largest business segment—gaming, which brought in $11.3 billion.

In the first quarter, NVIDIA's data center revenue was $39.1 billion, with networking contributing $4.9 billion. And as customers—whether research universities or large-scale data centers—continue scaling up their AI computing power, networking is poised for further growth.

“It is the most underappreciated part of NVIDIA's business, by orders of magnitude,” Deepwater Asset Management managing partner Gene Munster said in an interview. “Basically, networking doesn't get the attention because it's 11% of revenue. But it's growing like a rocket ship.”
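As a rough check on that share, the arithmetic can be sketched from the figures quoted above. The quote does not say which revenue base the 11% refers to, so taking data center revenue as the denominator is an assumption here; the variable and function names are illustrative only.

```python
# Figures as quoted in this article, in billions of US dollars.
FY_DATA_CENTER = 115.1  # last fiscal year, total data center revenue
FY_NETWORKING = 12.9    # last fiscal year, networking sales
FY_GAMING = 11.3        # last fiscal year, gaming revenue
Q1_DATA_CENTER = 39.1   # first quarter, data center revenue
Q1_NETWORKING = 4.9     # first quarter, networking contribution


def share_pct(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`, rounded to one decimal place."""
    return round(100.0 * part / whole, 1)


# Assumption: the share is measured against data center revenue, not total revenue.
fy_share = share_pct(FY_NETWORKING, FY_DATA_CENTER)
q1_share = share_pct(Q1_NETWORKING, Q1_DATA_CENTER)

print(f"Fiscal-year networking share of data center revenue: {fy_share}%")
print(f"First-quarter networking share of data center revenue: {q1_share}%")
print(f"Networking minus gaming, fiscal year: ${FY_NETWORKING - FY_GAMING:.1f}B")
```

On these numbers networking comes to about 11.2% of data center revenue for the fiscal year and about 12.5% for the first quarter, which is in line with the roughly 11% figure in the quote.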

Connecting Thousands of Chips

Kevin Deierling, NVIDIA's Senior Vice President of Networking, explained that in the era of the AI boom, the company must address three different types of networking. The first is NVLink technology, which connects GPUs within a server or across multiple servers in large rack-mounted systems, enabling them to communicate and enhance overall performance.

Next is InfiniBand, which links multiple server nodes within a data center, effectively forming one massive AI computer. Then there's the front-end network for storage and system management, which relies on Ethernet connections.

However, the purpose of all these connections isn't just to help chips and servers communicate. They're designed to enable these devices to exchange data at the fastest possible speeds. If you're trying to run a cluster of servers as a single computing unit, their communication must happen in an instant.

Insufficient data flow to the GPUs can slow down the entire computation process, delay other operations, and reduce the overall efficiency of the data center.

Munster elaborated: “[NVIDIA is a] very different business without networking. The output that the people who are buying all the NVIDIA chips are desiring wouldn't happen if it weren't for their networking.”

And as companies continue developing larger AI models—along with autonomous and semi-autonomous agents capable of performing tasks for users—ensuring these GPUs work in harmony is becoming increasingly critical.

This is especially true as “inference”—the process of running AI models—demands more powerful data center systems.

The Growing Importance of Inference

The AI industry is undergoing a major shift centered around the concept of inference. In the early days of the AI boom, it was believed that training AI models required ultra-powerful supercomputers, while actually running these models required relatively modest computing power.

Earlier this year, DeepSeek claimed it had trained AI models using non-top-tier NVIDIA chips, sparking some concerns on Wall Street. At the time, the thinking was that if companies could train and run AI models on less powerful chips, there would be no need for NVIDIA's expensive high-performance systems.

But this argument was quickly debunked, as chip companies pointed out that AI models perform better on high-performance AI computers, processing more information faster than they could on inferior systems.

“It turns out that [inference is] starting to look more and more like training as we get to an agentic workflow. So all of these networks are important. Having them together, tightly coupled to the CPU, the GPU, and the DPU [data processing unit], all of that is vitally important to make inferencing a good experience.”

However, NVIDIA's competitors are circling. AMD is looking to grab more market share, while cloud giants like Amazon, Google, and Microsoft continue developing their own AI chips.

Alvin Nguyen, an analyst at Forrester Research, noted that industry groups also have competing networking technologies, including UALink—a direct challenger to NVLink.

For now, though, NVIDIA remains in the lead. And as tech giants, research institutions, and enterprises continue scrambling for NVIDIA's chips, its networking business is almost certain to keep growing.

Disclaimer: The views in this article are from the original Creator and do not represent the views or position of Hawk Insight. The content of the article is for reference, communication and learning only, and does not constitute investment advice. If it involves copyright issues, please contact us for deletion.